
Link sequential program with multithread mkl

12/31/2023

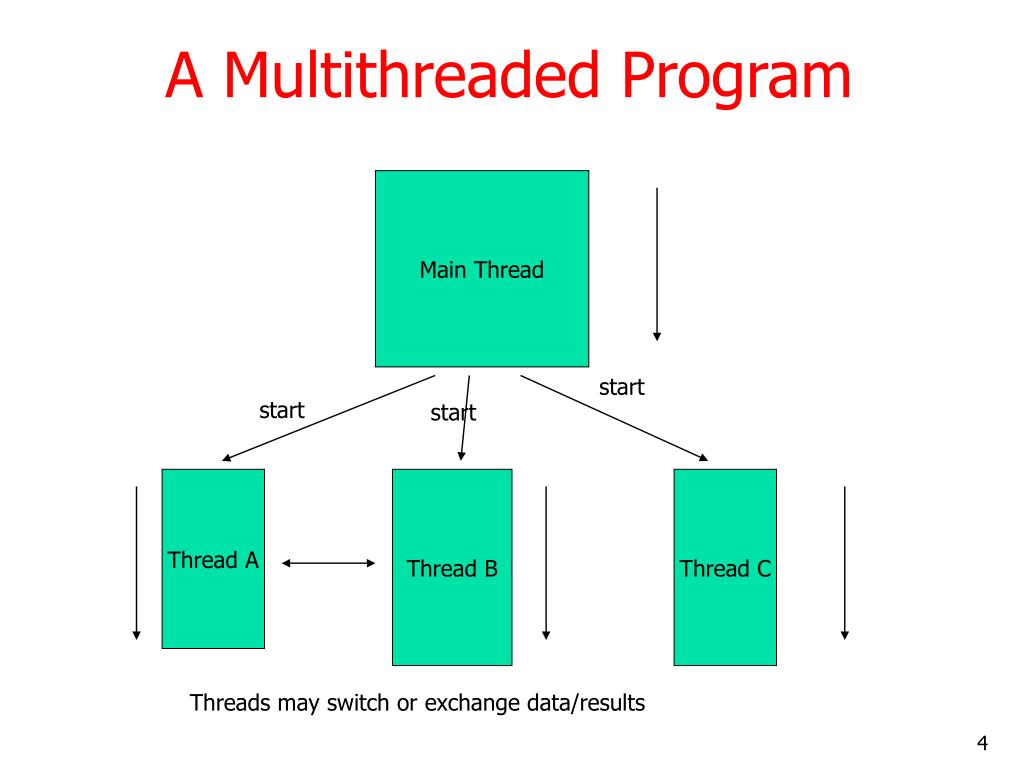

Where is MKL used? As mentioned in the OpenCV issue "MKL_WITH_TBB failed to found the latest TBB 2018 on Windows #11383", a BLAS library is no longer needed for DNN in OpenCV 3.3. I would expect LAPACK functionality to still be needed, given that the WITH_LAPACK option is still there, but even PCA does not use LAPACK for either the SVD or the covariance matrix + eigenvector computation.

The accompanying patch to OpenCVFindMKL.cmake:

--- OpenCVFindMKL.cmake 22:42:46.618955024 +0000
+++ new_OpenCVFindMKL.cmake 23:12:36.267540713 +0000
@@ -97,22 +97,11 @@
… VERSION_GREATER "11.3.0" OR $… is not supported")

The Intel Fortran compiler definitely supports OpenMP. Another possibility: as you know, Intel MKL is part of the Intel Fortran Composer suite for Windows, so with Intel MKL you can work with ifort + MKL on Windows directly. Or try 32-bit directly:

> gfortran -fopenmp -o mycode… mkl_intel_c.lib mkl_sequential.lib mkl_core.lib

There are essentially two solutions to the problem of outer-level threads spin-waiting while dgemm runs:

1) Use the code as above and, in addition to the suggested environment variables, also disable active waiting (the standard OpenMP name for this setting is OMP_WAIT_POLICY):

$ MKL_DYNAMIC=FALSE MKL_NUM_THREADS=8 OMP_NUM_THREADS=8 OMP_NESTED=TRUE OMP_WAIT_MODE=passive ./exe

2) Modify the code to split the parallel regions: end the first #pragma omp parallel region before the dgemm call and open a second one after it.

For solution 1, the threads go into sleep mode immediately and do not consume cycles. The downside is that a thread has to wake up from this deeper sleep state, which increases the latency compared to the spin-wait.

For solution 2, the threads are kept in their spin-wait loop and are very likely actively waiting when the dgemm call enters its parallel region. The additional joins and forks also introduce some overhead, but this may be better than the over-subscription of the initial solution with the single construct, or than solution 1.

Which solution is best will clearly depend on the amount of work being done in the dgemm operation compared to the synchronization overhead for fork/join, which is mostly dominated by the thread count and the internal implementation.
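Solution 2 can be sketched in C roughly as follows. This is only an illustration under stated assumptions: `matmul_standin` is a hypothetical placeholder for MKL's dgemm so that the example builds without MKL, and `scale_multiply_shift` is an invented name for the surrounding computation. The point is the structure, with the library call sitting between two separate parallel regions instead of inside one.

```c
#include <assert.h>

/* Hypothetical stand-in for MKL's dgemm: C = A * B for n x n row-major
 * matrices. A threaded library call would open its own parallel region
 * here, which this sketch imitates with a parallel loop. */
void matmul_standin(int n, const double *a, const double *b, double *c)
{
    assert(n > 0 && a && b && c);
    #pragma omp parallel for
    for (int i = 0; i < n; ++i) {
        for (int j = 0; j < n; ++j) {
            double sum = 0.0;
            for (int k = 0; k < n; ++k)
                sum += a[i * n + k] * b[k * n + j];
            c[i * n + j] = sum;
        }
    }
}

/* Solution 2: two parallel regions with the multiply between them. */
void scale_multiply_shift(int n, double *a, const double *b, double *c)
{
    assert(n > 0);

    /* Region 1: the team prepares A, then joins at the end of the loop. */
    #pragma omp parallel for
    for (int i = 0; i < n * n; ++i)
        a[i] *= 2.0;

    /* Called outside any parallel region, so the multiply is not nested
     * and no outer team is waiting on a single construct. */
    matmul_standin(n, a, b, c);

    /* Region 2: a second fork to post-process C. */
    #pragma omp parallel for
    for (int i = 0; i < n * n; ++i)
        c[i] += 1.0;
}
```

With GCC this would typically be built with `-fopenmp`; without that flag the pragmas are ignored and the code runs serially with the same result.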
A related report is issue 1303, "Training and running dnns on CPU with MKL Multithreaded is slower than with MKL Sequential" (closed, opened by Zyrin, 7 comments). Version: 19.8 + 19.10. Platform: Windows 7 + 10 64 bit and Ubuntu 16.04.

If each thread processes its own private data, then you don't need any synchronisation constructs, but using multithreaded MKL won't give you any benefit either. Therefore I would assume that your case is the former, shared-data one.

And run with:

$ MKL_DYNAMIC=FALSE MKL_NUM_THREADS=8 OMP_NUM_THREADS=8 OMP_NESTED=TRUE ./exe

You could also set the parameters with the appropriate calls, such as mkl_set_dynamic(0), though I would prefer to use environment variables instead.

While this post is a bit dated, I would still like to give some useful insights for it. The above answer is correct from a functional perspective, but will not give the best results from a performance perspective. The reason is that most OpenMP implementations do not shut down the threads when they reach a barrier or have no work to do. Instead, the threads enter a spin-wait loop and continue to consume processor cycles while they are waiting. What will happen is that even if the dgemm call creates additional threads inside MKL, the outer-level threads will still be actively waiting for the end of the single construct, and thus dgemm will run with reduced performance.

If the data to be processed with dgemm_ is shared, then you have to invoke the latter from within a single construct.
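A minimal sketch of that pattern in C is shown here. The names are assumptions made for illustration: `dgemm_standin` is a hypothetical replacement for the real dgemm_ so the example compiles without MKL, and `fill_then_multiply` is an invented wrapper. All threads cooperate on preparing the shared matrix, and exactly one of them issues the multiply.

```c
#include <assert.h>

/* Hypothetical stand-in for dgemm_: C = A * B for n x n row-major
 * matrices. Its inner parallel loop plays the role of the threads a
 * multithreaded library would create (nested parallelism). */
void dgemm_standin(int n, const double *a, const double *b, double *c)
{
    assert(n > 0 && a && b && c);
    #pragma omp parallel for
    for (int i = 0; i < n; ++i) {
        for (int j = 0; j < n; ++j) {
            double sum = 0.0;
            for (int k = 0; k < n; ++k)
                sum += a[i * n + k] * b[k * n + j];
            c[i * n + j] = sum;
        }
    }
}

/* One parallel region; the multiply on shared data runs inside single. */
void fill_then_multiply(int n, double *a, const double *b, double *c)
{
    assert(n > 0);
    #pragma omp parallel
    {
        /* Work-shared fill of A; the loop ends with an implicit barrier,
         * so A is complete before any thread continues. */
        #pragma omp for
        for (int i = 0; i < n * n; ++i)
            a[i] = (double)(i + 1);

        /* Exactly one thread makes the call. The other threads wait at
         * the implicit barrier that closes the single construct. */
        #pragma omp single
        {
            dgemm_standin(n, a, b, c);
        }
    }
}
```

Unless nested parallelism is enabled, the inner region created by the call runs with a single thread, which is why the nested-parallelism settings matter for this pattern.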

This is supported by MKL, but it only works if your executable is built using the Intel C/C++ compiler. The reason for that restriction is that MKL uses Intel's OpenMP runtime and that different OMP runtimes do not play well with each other. Once that is sorted out, you should enable nested parallelism by setting OMP_NESTED to TRUE and disable MKL's detection of nested parallelism by setting MKL_DYNAMIC to FALSE.

Some limitations and additional requirements: Intel® oneAPI Math Kernel Library for DPC++ only supports using the mkl_intel_ilp64 interface library and sequential or TBB threading. Use the oneMKL Link Line Advisor to configure the link command according to your program features.

You can link or copy this script to a place where users can invoke it. On other systems you need to have the gzip program installed, when you can use…
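As a rough illustration of applying the environment settings named above from C rather than from the shell, the sketch below exports them and then replaces the current process with the target program. Everything here is an assumption made for the example: the function names `export_nested_mkl_env` and `exec_with_nested_mkl_env` are hypothetical, and the code relies on the POSIX calls `setenv` and `execvp`, so it does not apply to Windows as written.

```c
#define _POSIX_C_SOURCE 200112L  /* for setenv() */
#include <assert.h>
#include <stdio.h>
#include <stdlib.h>
#include <unistd.h>

/* Export the settings quoted in the text for `threads` threads.
 * Returns 0 on success and -1 if any variable could not be set. */
int export_nested_mkl_env(int threads)
{
    char count[16];

    assert(threads > 0);
    snprintf(count, sizeof count, "%d", threads);

    if (setenv("OMP_NESTED", "TRUE", 1) != 0) return -1;
    if (setenv("MKL_DYNAMIC", "FALSE", 1) != 0) return -1;
    if (setenv("OMP_NUM_THREADS", count, 1) != 0) return -1;
    if (setenv("MKL_NUM_THREADS", count, 1) != 0) return -1;
    return 0;
}

/* Set the variables, then exec argv[0] with the given arguments.
 * On success this never returns; -1 means setup or exec failed. */
int exec_with_nested_mkl_env(int threads, char *const argv[])
{
    if (export_nested_mkl_env(threads) != 0)
        return -1;
    execvp(argv[0], argv);
    return -1;
}
```

The reason for exec-ing a fresh process is that the variables are then already in place when the target program starts and its threading runtimes read them.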
